Real-time graphics in games and visualizations can achieve realism very effectively by approximating physical lighting. Even a basic emulation of soft indirect light bounce and darkened corners goes a long way toward making a simple scene feel “present”.

A classic way to calculate this kind of lighting is the radiosity algorithm. Common hardware is getting closer and closer to doing this in real time, per frame, but for now a typical commodity 3D engine like Unity or UE4 just precomputes lightmaps: textures that store the amount of light received by every part of a given object surface.

WebGL graphics implementations (usually based on ThreeJS) do not have a “native” way of doing this: lightmaps are usually baked in another engine like Unity, or in an authoring tool like Blender. I wanted to build a cheap and quick lightmap baker that would work “on the fly” right there in the browser, without needing to export the scene to a separate tool. This felt very useful for coding quick sketches with dynamically generated geometry.

My baker library goes through every lightmap texel (i.e. patch of scene surface) and performs a very simple light probe: it renders the scene, as seen from that texel, into a very low-res half-cubemap and then averages the cubemap data into a single texel value. Once a full pass over the scene is done, the output lightmap texture is applied to the scene and another pass is run. Each pass effectively adds one more light bounce, so even surfaces in shadow end up receiving an indirect contribution from lit surfaces.
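
The probe-and-average loop can be sketched on the CPU as below. This is only an illustration of the idea, not the library's code: `renderProbe` is a hypothetical callback standing in for the GPU render and pixel readback, and the uniform weighting of probe pixels is my assumption (a real baker may weight pixels by angle).

```typescript
// One lightmap texel colour.
type RGB = [number, number, number];

// Average the RGBA pixels of one probe render into a single texel colour.
// `pixels` holds RGBA floats; `weights` is optional (uniform if omitted).
function averageProbe(pixels: Float32Array, weights?: Float32Array): RGB {
  const count = pixels.length / 4;
  let r = 0, g = 0, b = 0, total = 0;
  for (let i = 0; i < count; i++) {
    const w = weights ? weights[i] : 1;
    r += pixels[i * 4] * w;
    g += pixels[i * 4 + 1] * w;
    b += pixels[i * 4 + 2] * w;
    total += w;
  }
  return total > 0 ? [r / total, g / total, b / total] : [0, 0, 0];
}

// Hypothetical callback: renders the scene as seen from texel `index`,
// lit by the lightmap produced in the previous pass.
type ProbeRenderer = (index: number, previous: Float32Array) => Float32Array;

// Run several passes; each pass reads the previous lightmap, so every
// pass adds one more bounce of indirect light.
function bake(texelCount: number, passes: number, renderProbe: ProbeRenderer): Float32Array {
  let lightmap = new Float32Array(texelCount * 3);
  for (let pass = 0; pass < passes; pass++) {
    const next = new Float32Array(texelCount * 3);
    for (let i = 0; i < texelCount; i++) {
      next.set(averageProbe(renderProbe(i, lightmap)), i * 3);
    }
    lightmap = next; // applied to the scene before the next pass
  }
  return lightmap;
}
```

Writing each pass into a fresh buffer keeps the pass self-consistent: every texel in a pass sees the same previous lightmap rather than a half-updated one.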

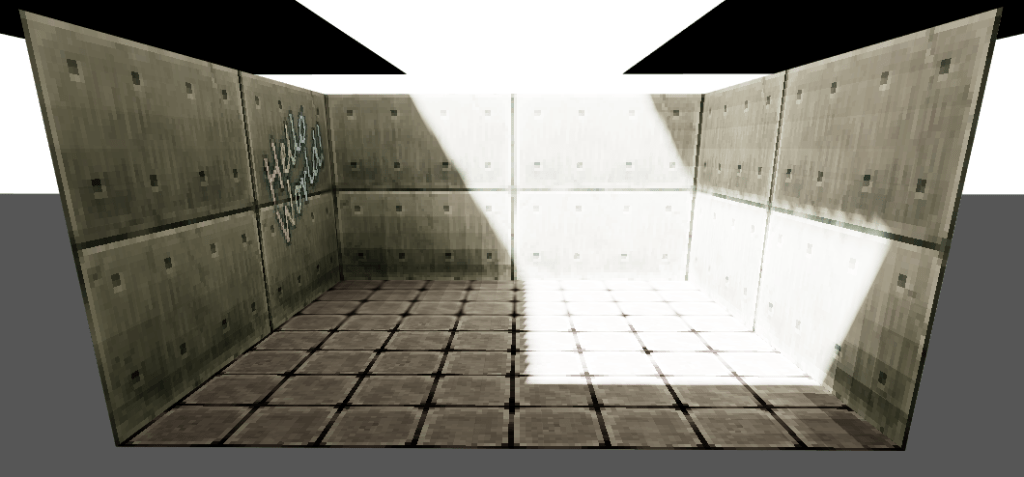

This approach produces surprisingly convincing results! Definitely good enough for a draft sketch in code. It uses the same shaders and GPU rendering pipeline as the final view, and supports emissive area lights “for free”.

To enable the “everything in the browser” philosophy I also needed to add a rudimentary UV unwrapper. My proof-of-concept finds coplanar islands of triangles (i.e. surface n-gons), computes their bounding boxes in tangent space (with some heuristics for how the contents are rotated) and then lays out all those bounding boxes using the popular Potpack library. This is far from being the most space-efficient or flexible layout algorithm, but it works well enough for typical small in-browser WebGL scenes.
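
To give a feel for the layout step, here is a rough sketch that packs island bounding boxes into an atlas and converts a placed box into UV coordinates. It uses a naive shelf packer as a self-contained stand-in, not Potpack itself, and the names `packShelves` and `toUV` are made up for this example.

```typescript
// Bounding box of one UV island; x and y are filled in by the packer.
interface Box { w: number; h: number; x?: number; y?: number }

// Naive shelf packing: place boxes left to right, tallest first, and
// start a new row ("shelf") whenever the current one would overflow.
function packShelves(boxes: Box[], maxWidth: number): { w: number; h: number } {
  const sorted = [...boxes].sort((a, b) => b.h - a.h);
  let x = 0, y = 0, shelfHeight = 0, usedWidth = 0;
  for (const box of sorted) {
    if (x > 0 && x + box.w > maxWidth) {
      y += shelfHeight; // move up past the finished shelf
      x = 0;
      shelfHeight = 0;
    }
    box.x = x;
    box.y = y;
    x += box.w;
    shelfHeight = Math.max(shelfHeight, box.h);
    usedWidth = Math.max(usedWidth, x);
  }
  return { w: usedWidth, h: y + shelfHeight };
}

// Convert a placed box into normalised 0..1 UV bounds within the atlas.
function toUV(box: Box, atlas: { w: number; h: number }) {
  const x = box.x ?? 0, y = box.y ?? 0;
  return {
    u0: x / atlas.w, v0: y / atlas.h,
    u1: (x + box.w) / atlas.w, v1: (y + box.h) / atlas.h,
  };
}
```

A real unwrapper would also leave a small gutter around each box so that texture filtering does not bleed light between neighbouring islands.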

The ability to generate lightmaps programmatically on a live scene is very convenient for advanced workflows. Generating a lightmap in layers and combining those at render time allows switching individual lights on and off and modulating their colour. I even experimented with faking animated indirect lighting in real-time: in the screenshots below, three or four lightmap “keyframes” are generated for the roof opening animation, and the final rendered scene interpolates between those keyframes to match the current frame of the animation. As a result the scene transitions in real-time from being dimly lit to being flooded with sunlight.
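
Both tricks boil down to simple per-texel arithmetic. The sketch below shows them on plain arrays of RGB values; in a real scene this blending would more likely happen in a shader. Evenly spaced keyframes, linear interpolation and the function names are my assumptions for the example.

```typescript
// Linearly interpolate between evenly spaced lightmap keyframes.
// `t` is the animation progress from 0 (first keyframe) to 1 (last).
function blendKeyframes(keyframes: Float32Array[], t: number): Float32Array {
  if (keyframes.length === 0) throw new Error("need at least one keyframe");
  const last = keyframes.length - 1;
  const pos = Math.min(Math.max(t, 0), 1) * last;
  const i = Math.min(Math.floor(pos), Math.max(last - 1, 0));
  const frac = pos - i;
  const a = keyframes[i];
  const b = keyframes[Math.min(i + 1, last)];
  const out = new Float32Array(a.length);
  for (let k = 0; k < a.length; k++) {
    out[k] = a[k] + (b[k] - a[k]) * frac;
  }
  return out;
}

// One baked layer per light, plus a tint applied at render time.
interface LightLayer { texels: Float32Array; tint: [number, number, number] }

// Sum the layers, scaling each by its tint. A tint of [0, 0, 0] switches
// that light off without re-baking anything.
function combineLayers(layers: LightLayer[]): Float32Array {
  const out = new Float32Array(layers[0]?.texels.length ?? 0);
  for (const { texels, tint } of layers) {
    for (let k = 0; k < out.length; k++) {
      out[k] += texels[k] * tint[k % 3];
    }
  }
  return out;
}
```

Summing per-light layers works because light transport is additive: the lightmap for two lights together is the sum of the lightmaps baked for each light alone.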

This proof-of-concept was eventually packaged as the official @react-three/lightmap module and expanded to support Suspense and other workflow niceties. The original proof-of-concept code is also open source and available on GitHub.
